EVA-CLIP-8B

Vanilla vs UniRefiner

CVPR 2026

TL;DR

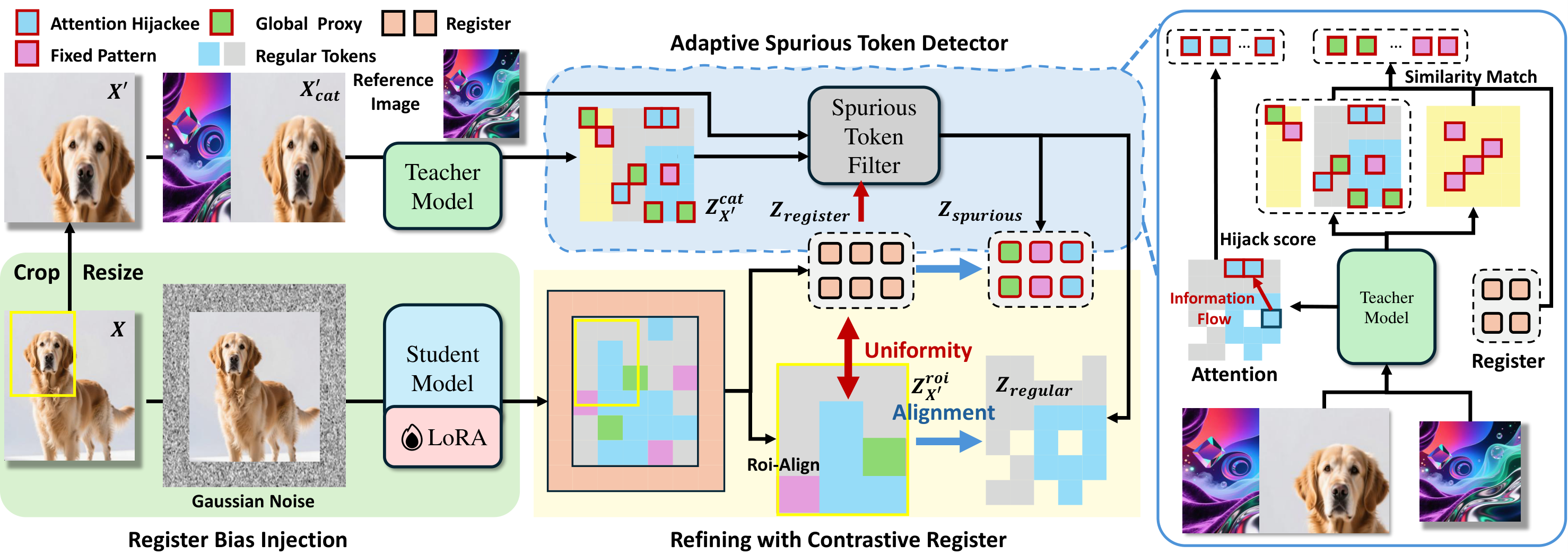

UniRefiner treats any token that fails to preserve location-aligned semantics as spurious. It then teaches pre-trained ViTs to preserve regular image tokens while pushing spurious responses into boundary registers, which enables lightweight post-hoc refinement of even 8B-scale models in roughly 30 minutes.

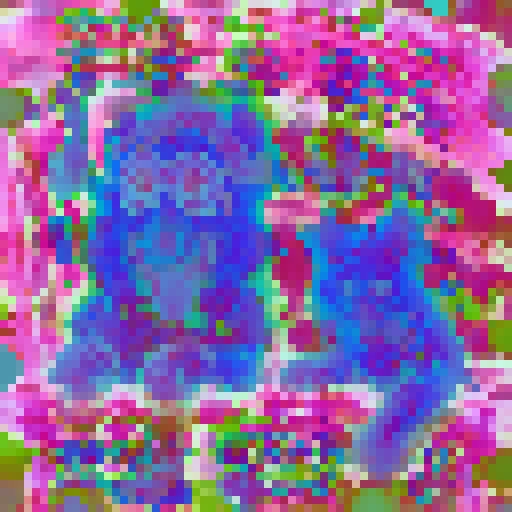

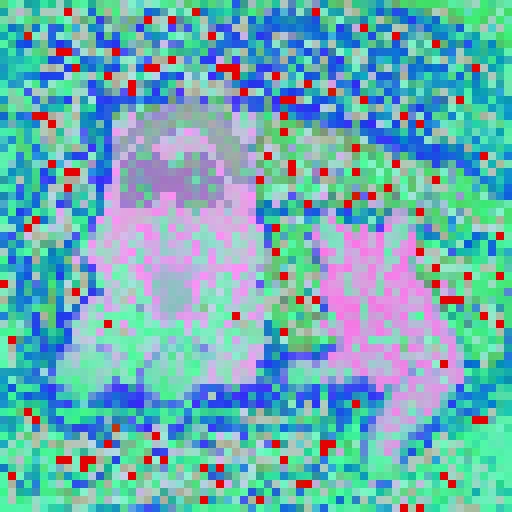

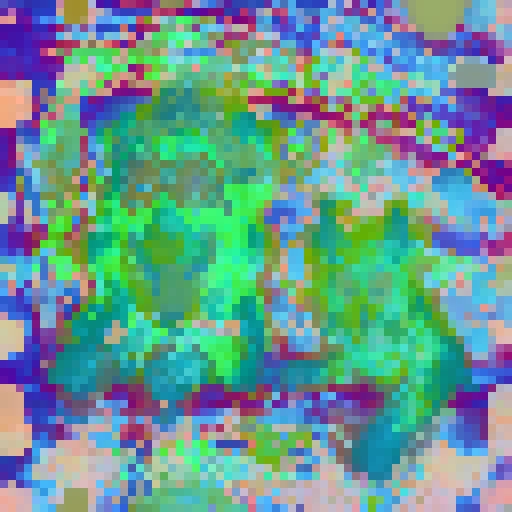

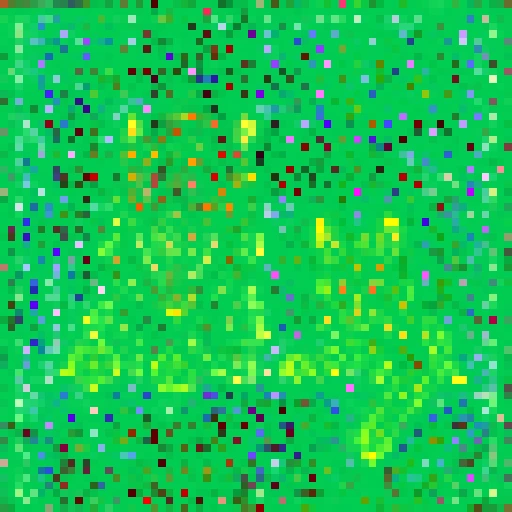

PCA dynamics throughout the refining process, comparing frozen vanilla features against UniRefiner under the same rendering setup.

Each comparison pairs a Vanilla panel with a UniRefiner panel.

* Qwen3 appears less stable because the spurious-token ratio drops sharply during refinement, making the PCA basis itself vary more noticeably.
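The note above depends on how a PCA rendering is built, so a minimal sketch may help. It assumes patch tokens are projected onto their top three principal components and rescaled to RGB, with the basis refit on whatever tokens are present at each step. The function names `fit_pca_basis` and `project_to_rgb` are hypothetical and are not taken from the UniRefiner code.

```python
import numpy as np

def fit_pca_basis(tokens: np.ndarray, k: int = 3):
    """Fit a k-component PCA basis on (N, D) patch tokens (assumes N, D >= k)."""
    mean = tokens.mean(axis=0)
    # Rows of vt are principal directions, sorted by explained variance.
    _, _, vt = np.linalg.svd(tokens - mean, full_matrices=False)
    return mean, vt[:k]

def project_to_rgb(tokens: np.ndarray, mean: np.ndarray, basis: np.ndarray):
    """Project tokens onto the basis and min-max scale each channel to [0, 1]."""
    proj = (tokens - mean) @ basis.T
    lo, hi = proj.min(axis=0), proj.max(axis=0)
    return (proj - lo) / (hi - lo + 1e-8)
```

Because the basis is fit to the current token set, a handful of high-variance outlier tokens can dominate the leading components. If those tokens disappear between two steps, the leading directions (and therefore the colors) can shift even where the underlying regular tokens change little.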

Preliminary

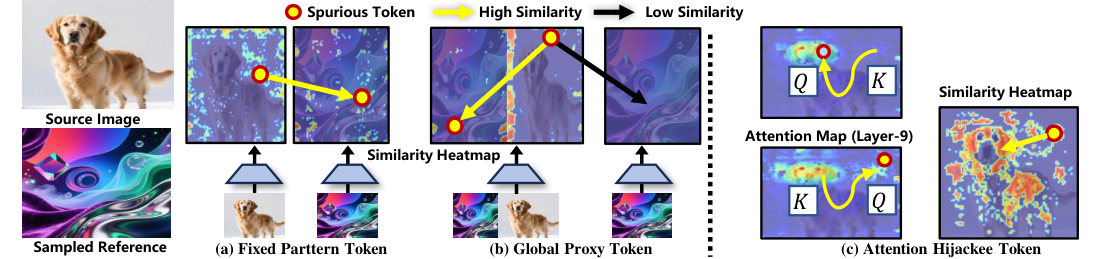

UniRefiner starts from a simple view: for dense prediction, any token that no longer preserves location-aligned semantics should be treated as spurious. Three kinds of tokens fall under this definition:

Tokens that remain similar across unrelated images instead of reflecting local visual content.

Tokens that drift toward scene-level context and stop encoding the semantics of their own spatial position.

Tokens that are overwritten by stronger neighbors through attention flow and lose their own spatial identity.
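The first pattern above, tokens that stay similar across unrelated images, can be illustrated with a simple score. This is a sketch under assumptions and not the paper's filtering rule: each token is scored by its best cosine match in every other image of a batch, averaged over those images. The name `cross_image_similarity` and any threshold applied to its output are hypothetical.

```python
import numpy as np

def cross_image_similarity(tokens: np.ndarray) -> np.ndarray:
    """Score tokens by how well they are matched in other images.

    tokens: (B, N, D) patch tokens from B >= 2 unrelated images.
    Returns (B, N): mean over the other images of the best cosine match.
    """
    b, n, d = tokens.shape
    unit = tokens / (np.linalg.norm(tokens, axis=-1, keepdims=True) + 1e-8)
    flat = unit.reshape(b * n, d)
    # sim[i, p, j, q] = cosine similarity of token p in image i and token q in image j.
    sim = (flat @ flat.T).reshape(b, n, b, n)
    best = sim.max(axis=3)                      # (B, N, B): best match per image
    own = np.eye(b, dtype=bool)[:, None, :]     # mask out matches within the same image
    best = np.where(own, 0.0, best)
    return best.sum(axis=2) / (b - 1)
```

A token that encodes local content should rarely find a near-duplicate in an unrelated image, so a consistently high score suggests the token is content-independent. A cutoff such as `scores > 0.9` would be one assumed way to flag candidates.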

Method

Spurious token filtering. A frozen teacher branch separates regular and spurious tokens using similarity cues, attention flow, and register feedback.

Contrastive register distillation. Student image tokens align to filtered regular tokens, while boundary registers align to detected spurious tokens.

Adaptive refinement. As training progresses, learned registers become stronger spurious detectors and improve the next round of filtering.
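The two alignment targets described above can be sketched as a simplified cosine objective. This is an illustration only: it omits the contrastive formulation and the adaptive filtering loop, and the paper should be consulted for the actual loss. The function `alignment_loss`, its arguments, and the choice to leave student tokens at spurious positions unsupervised are all assumptions.

```python
import numpy as np

def _unit(x: np.ndarray) -> np.ndarray:
    """L2-normalize along the last axis."""
    return x / (np.linalg.norm(x, axis=-1, keepdims=True) + 1e-8)

def alignment_loss(student_tokens, student_registers, teacher_tokens, spurious_mask):
    """Simplified two-term alignment loss.

    student_tokens:    (N, D) student image tokens
    student_registers: (R, D) student register tokens
    teacher_tokens:    (N, D) frozen teacher tokens
    spurious_mask:     (N,) bool, True where the teacher token was flagged spurious
    """
    s, r, t = _unit(student_tokens), _unit(student_registers), _unit(teacher_tokens)
    regular = ~spurious_mask

    # Term 1: student image tokens follow the teacher at regular positions.
    token_term = 0.0
    if regular.any():
        token_term = float(np.mean(1.0 - np.sum(s[regular] * t[regular], axis=-1)))

    # Term 2: every spurious teacher token should be matched by some register.
    register_term = 0.0
    if spurious_mask.any():
        sim = t[spurious_mask] @ r.T            # (S, R) cosine similarities
        register_term = float(np.mean(1.0 - sim.max(axis=1)))

    return token_term + register_term
```

In this sketch the loss reaches zero when the student reproduces the teacher at regular positions and the registers cover the flagged tokens, which mirrors the stated goal of keeping regular tokens intact while routing spurious responses into registers.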

Experiment

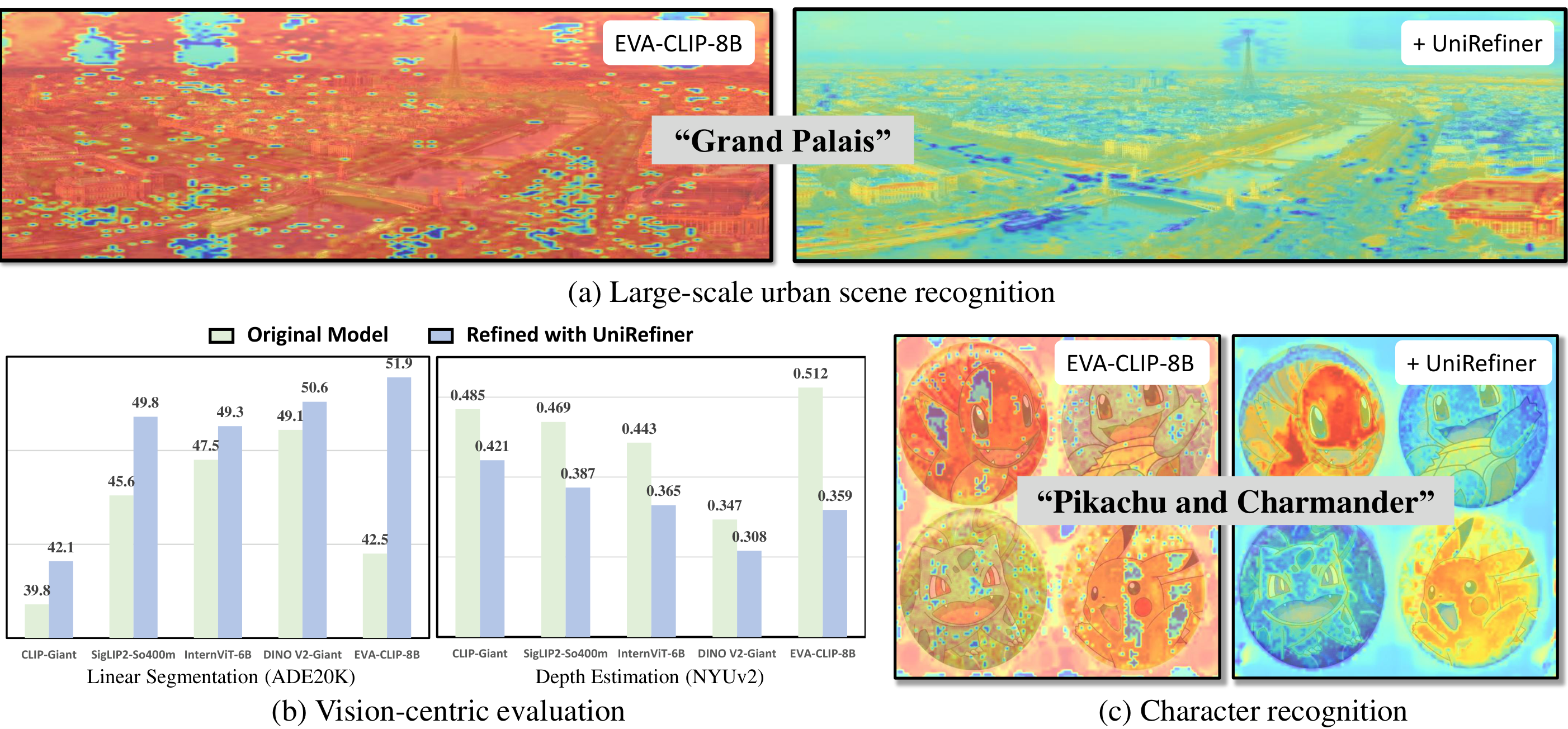

UniRefiner significantly improves dense prediction performance on both visual-centric and vision-language backbones. For the full set of quantitative and qualitative results, please refer to the paper.

Citation

% BibTeX placeholder

% The citation block will be added once the final proceedings metadata is available.